While most honest writers have at least acknowledged the obstacles to commercially scaled fusion, they typically still underestimate them – as much so today as back in the 1980s. First, we are told that a fusion reaction would have to occur “many times a second” to produce usable amounts of energy. But the blast of energy from the LLNL fusion reactor lasted only one-tenth of a nanosecond – that’s a ten-billionth of a second. Apparently, other fusion reactions (with a net energy loss) have operated for a few nanoseconds, but reproducing this reaction over a billion times every second is far beyond what researchers are even contemplating.

Second, we are told that the reactor produced about 1.5 times the amount of energy that was input, but this only counts the laser energy that actually struck the reactor vessel. That energy, which is necessary to generate temperatures over a hundred million degrees, was the product of an array of 192 high-powered lasers, which required well over 100 times as much energy to operate. Third, we are told that nuclear fusion will someday free up vast areas of land that are currently needed to operate solar and wind power installations. But the entire facility needed to house the 192 lasers and all the other necessary control equipment was large enough to contain three football fields, even though the actual fusion reaction takes place in a gold or diamond vessel smaller than a pea. All this just to generate the energy that a typical small home uses in about 10 to 20 minutes. Clearly, even the most inexpensive rooftop solar systems can already do far more. And Prof. Mark Jacobson’s group at Stanford University has calculated that a total conversion to wind, water and solar power might use about as much land as is currently occupied by the world’s fossil fuel infrastructure.
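The energy accounting above can be checked with a few lines of arithmetic. This is only a rough sketch using the two figures quoted in the text: a gain of about 1.5 relative to the laser energy delivered, and lasers that needed at least 100 times that energy to operate. The function and constant names are illustrative, and since "well over 100 times" is a lower bound, the result is an upper bound on the overall gain.

```python
def wall_plug_gain(target_gain: float, laser_overhead: float) -> float:
    """Fusion energy out divided by the energy drawn to run the lasers.

    target_gain: fusion output relative to laser energy delivered to the target.
    laser_overhead: energy needed to operate the lasers, relative to the
        energy they deliver to the target.
    """
    if laser_overhead <= 0:
        raise ValueError("laser_overhead must be positive")
    return target_gain / laser_overhead


# Figures as quoted in the article (the overhead is a lower bound).
TARGET_GAIN = 1.5
LASER_OVERHEAD = 100.0

overall = wall_plug_gain(TARGET_GAIN, LASER_OVERHEAD)
print(f"Overall gain is at most {overall:.3f}")
print(f"That is at most {overall:.1%} of the energy used to run the lasers")
```

On these numbers the experiment returned no more than about 1.5 percent of the energy needed to run the lasers, which is the gap the headline figure leaves out.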

Long-time nuclear critic Karl Grossman wrote on CounterPunch recently of the many likely obstacles to scaling up fusion reactors, even in principle, including high radioactivity, rapid corrosion of equipment, excessive water demands for cooling, and the likely breakdown of components that would need to operate at unfathomably high temperatures and pressures. His main source on these issues is Dr. Daniel Jassby, who headed Princeton’s pioneering fusion research lab for 25 years. The Princeton lab, along with researchers in Europe, has led the development of a more common device for achieving nuclear fusion reactions, a doughnut-shaped or spherical vessel known as a tokamak. Tokamaks, which contain much larger volumes of highly ionized gas (actually a plasma, a fundamentally different state of matter), have achieved substantially more voluminous fusion reactions for several seconds at a time, but have never come close to producing more energy than is injected into the reactor.

The laser-mediated fusion reaction achieved at LLNL occurred at a lab called the National Ignition Facility, which touts its work on fusion for energy, but is primarily dedicated to nuclear weapons research. Prof. M. V. Ramana of the University of British Columbia, whose recent article was posted on the newly revived ZNetwork, explains, “NIF was set up as part of the Science Based Stockpile Stewardship Program, which was the ransom paid to the US nuclear weapons laboratories for forgoing the right to test after the United States signed the Comprehensive Test Ban Treaty” in 1996. It is “a way to continue investment into modernizing nuclear weapons, albeit without explosive tests, and dressing it up as a means to produce ‘clean’ energy.” Ramana cites a 1998 article that explained how one aim of laser fusion experiments is to try to develop a hydrogen bomb that doesn’t require a conventional fission bomb to ignite it, potentially eliminating the need for highly enriched uranium or plutonium in nuclear weapons.

While some writers predict a future of nuclear fusion reactors running on seawater, the actual fuel for both tokamaks and laser fusion experiments consists of two isotopes of hydrogen: deuterium, which has an extra neutron in its nucleus, and tritium, which has two. Deuterium is stable and somewhat common: approximately one out of every 5,000 to 6,000 hydrogen atoms in seawater is actually deuterium, and it is a necessary ingredient (as a component of “heavy water”) in conventional nuclear reactors. Tritium, however, is radioactive, with a half-life of twelve years, and is typically a costly byproduct ($30,000 per gram) of an unusual type of nuclear reactor known as CANDU, mainly found today in Canada and South Korea. With half the operating CANDU reactors scheduled for retirement this decade, available tritium supplies will likely peak before 2030, and a new experimental fusion facility under construction in France will nearly exhaust the available supply in the early 2050s. That is the conclusion of a highly revealing article that appeared in Science magazine last June, months before the latest fusion breakthrough. While the Princeton lab has made some progress toward potentially recycling tritium, fusion researchers remain highly dependent on rapidly diminishing supplies. Alternative fuels for fusion reactors are also under development, based on helium or boron, but these require temperatures up to a billion degrees to trigger a fusion reaction. The European lab plans to experiment with new ways of generating tritium, but these also significantly increase the radioactivity of the entire process, and a tritium gain of only 5 to 15 percent is anticipated. The more downtime between experimental runs, the less tritium it will produce. The Science article quotes Daniel Jassby, formerly of the Princeton fusion lab, saying that the tritium supply issue essentially “makes deuterium-tritium fusion reactors impossible.”
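Part of the supply problem is that tritium decays while it sits in storage. The sketch below assumes the article's rounded half-life of twelve years and a stockpile that receives no new production. The function name is illustrative, and this is not a model of any actual inventory.

```python
HALF_LIFE_YEARS = 12.0  # rounded half-life of tritium, as given in the article


def remaining_fraction(years: float, half_life: float = HALF_LIFE_YEARS) -> float:
    """Fraction of an initial tritium stock left after `years`, with no resupply."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return 0.5 ** (years / half_life)


# Each additional half-life halves whatever is left.
for years in (12, 24, 36):
    print(f"after {years} years: {remaining_fraction(years):.1%} remains")
```

Under these assumptions, only a quarter of any tritium set aside today would still exist after 24 years, before a single experiment had consumed any of it.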

So why all this attention toward the imagined potential for fusion energy? It is yet another effort by those who believe that only a mega-scaled, technology-intensive approach can be a viable alternative to our current fossil fuel-dependent energy infrastructure. Some of the same interests continue to promote the false claims that a “new generation” of nuclear fission reactors will solve the persistent problems with nuclear power, or that massive-scale capture and burial of carbon dioxide from fossil-fueled power plants will make it possible to perpetuate the fossil-based economy far into the future. It is beyond the scope of this article to systematically address those claims, but it is clear that today’s promises for a new generation of “advanced” reactors are not much different from what we were hearing back in the 1980s, ’90s or early 2000s.

Nuclear whistleblower Arnie Gundersen has systematically exposed the flaws in the ‘new’ reactor design currently favored by Bill Gates, explaining that the underlying sodium-cooled technology is the same as in the reactor that “almost lost Detroit” due to a partial meltdown back in 1966, and has repeatedly caused problems in Tennessee, France and Japan. France’s nuclear energy infrastructure, which has long been touted as a model for the future, is increasingly plagued by equipment problems, massive cost overruns and some sources of cooling water no longer being cool enough, due to rising global temperatures. An attempt to export French nuclear technology to Finland took more than a decade longer than anticipated, at many times the original estimated cost. As for carbon capture, we know that countless, highly subsidized carbon capture experiments have failed and that the vast majority of the CO2 currently captured from power plants is used for “enhanced oil recovery,” i.e. increasing the efficiency of existing oil wells. The pipelines that would be needed to actually collect CO2 and bury it underground would be comparable to the entire current infrastructure for piping oil and gas, and the notion of permanent burial will likely prove to be a pipe dream.

Meanwhile, we know that new solar and wind power facilities are already cheaper to build than new fossil-fueled power plants and in some locations are even less costly than continuing to operate existing power plants. Last May, California was briefly able to run its entire electricity grid on renewable energy, a milestone that had already been achieved in Denmark and in South Australia. And we know that a variety of energy storage methods, combined with sophisticated load management and upgrades to transmission infrastructure, are already helping solve the problem of intermittency of solar and wind energy in Europe, California and other locations. At the same time, awareness is growing about the increasing reliance of renewable technology, including advanced batteries, on minerals extracted from Indigenous lands and the global South. Thus a meaningfully just energy transition needs both to be fully renewable and to reject the myths of perpetual growth that emerged from the fossil fuel era. If the end of the fossil fuel era portends the end of capitalist growth in all its forms, it is clear that all of life on earth will ultimately be the beneficiary.

The opinions expressed in these articles are the author’s own, and may not represent this publication’s specific position.

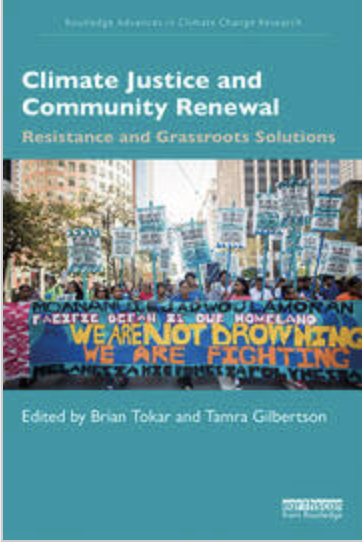

Brian Tokar is the co-editor (with Tamra Gilbertson) of Climate Justice and Community Renewal: Resistance and Grassroots Solutions (Routledge 2020) and the author and editor of six previous books on environmental issues and movements, including Toward Climate Justice: Perspectives on the Climate Crisis and Social Change (New Compass 2014). He is a lecturer in Environmental Studies at the University of Vermont and a long-term faculty and board member of the Vermont-based Institute for Social Ecology.

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License